Code Virtualization for .NET: How It Works and When to Use It

Code virtualization is the strongest protection technique available for .NET assemblies. It is also the most misunderstood - frequently confused with application virtualization or described in vague terms like "converts your code to a proprietary format." This page explains precisely what it does, how it works at the bytecode level, what an attacker sees when they encounter it, and how to apply it selectively to the methods where it matters most.

What makes virtualization different from other obfuscation techniques

Every .NET obfuscation technique - renaming, string encryption, control flow obfuscation, and virtualization - produces a valid .NET assembly containing CIL that conforms to the ECMA-335 specification. The difference is what that CIL represents.

With renaming, string encryption, and control flow obfuscation, the output CIL is a transformed version of your original method bodies. The instructions are still standard CIL opcodes. Decompilers like ILSpy and dnSpy know how to read them. Renaming removes meaningful names; control flow obfuscation scrambles the branching structure - but the logic of your code is still present in CIL form and can be reconstructed.

Code virtualization changes what the CIL represents. The output assembly is still valid CIL throughout - but the CIL of the original method body is gone, replaced by a small stub that invokes an embedded virtual machine. The VM interpreter is itself CIL. What disappears is the logic of your original method: it no longer exists as CIL anywhere in the assembly. Instead it lives as an encoded byte buffer that only the embedded VM knows how to execute.

A decompiler can reconstruct the VM interpreter stub perfectly. What it cannot reconstruct is the original method - because the original method is no longer there.

Why I built a VM for .NET

I have known about code virtualization as a protection technique for a long time - I am personally acquainted with the developer of VMProtect, which applies the same principle to native unmanaged code. Watching how effectively it raised the cost of reverse engineering for native applications made the idea of bringing it to .NET obvious. The principle is the same: replace the original code with a custom bytecode that only your VM knows how to execute.

What I did not fully anticipate was how much work lay in the implementation details. The VMProtect developer had warned me that exception handling was the hardest part - and he was right. .NET's exception handling model, with its nested try/catch/finally blocks, requires precise control over where execution resumes after an exception. When you are interpreting CIL rather than executing it natively, you have to replicate that behavior yourself: catching exceptions at the right point in the interpreter loop, unwinding the VM stack correctly, transferring control to the right finally block, and doing all of this in a way that the .NET runtime considers valid. Getting this right took significant time and taught me more about CIL's execution model than any documentation had.

The first version of the VM was also far too slow. My initial approach was to implement each CIL opcode as a C# method - clean, readable, easy to reason about. The overhead of calling a C# method for every single bytecode instruction made it impractical. I had to rebuild it by emitting CIL instructions manually for the interpreter loop, carefully controlling every allocation and branch. That experience gave me a deep appreciation for why interpreted runtimes are hard to make fast.

For the tooling, I chose Mono.Cecil to read and write .NET assemblies - it is genuinely well-designed for this kind of work, and a significant number of .NET obfuscator vendors use it for the same reason. dnlib is worth knowing as an alternative; it handles some edge cases differently and some developers prefer it. Learning to build a CIL interpreter also meant learning the parts of the specification that rarely come up in normal development: CIL code verification rules, the restrictions on ref structs (which cannot be boxed and therefore cannot live on a general-purpose VM stack), and the precise semantics of branching instructions. The current limitations around open generic methods and ref-struct parameters are direct consequences of these constraints - not oversights, but the boundary of what is implementable without significantly more complexity.

How virtualization works: compile time

When ArmDot virtualizes a method, the process happens in two stages - first at build time, then at runtime.

At build time, ArmDot reads the CIL of the selected method and recompiles it into a custom instruction set. Each standard CIL opcode is mapped to a custom opcode whose encoding is unique to that method. Operands are encoded in a proprietary format and placed into a static byte buffer that becomes part of the assembly. The original CIL method body is replaced with a small stub that invokes the embedded VM and passes it the buffer.

Two properties of this encoding matter for security. First, each method in the assembly gets a different opcode mapping - an attacker cannot analyze one virtualized method and apply that knowledge to another. Second, the encoding changes every time the project is built - the same method produces different bytecode today than it did yesterday. There is no stable mapping to reverse-engineer across builds.
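A toy encoder can make these two properties concrete. This is a minimal sketch, not ArmDot's actual encoding: the four-instruction ToyOp set, the ToyEncoder class, and the idea of deriving the opcode table from a single integer seed are all assumptions made for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical four-instruction set; a real VM would have to cover all of CIL.
public enum ToyOp { Push, Add, Mul, Ret }

public static class ToyEncoder
{
    // Builds a logical-operation -> byte-value table from a seed.
    // Assumption: the seed would mix a per-method value with a per-build value.
    public static Dictionary<ToyOp, byte> BuildOpcodeTable(int seed)
    {
        var rng = new Random(seed);
        var ops = (ToyOp[])Enum.GetValues(typeof(ToyOp));

        // Shuffle the 256 possible byte values and take one per operation,
        // so every operation gets a distinct encoding.
        byte[] codes = Enumerable.Range(0, 256)
            .OrderBy(_ => rng.Next())
            .Take(ops.Length)
            .Select(i => (byte)i)
            .ToArray();

        var table = new Dictionary<ToyOp, byte>();
        for (int i = 0; i < ops.Length; i++)
        {
            table[ops[i]] = codes[i];
        }
        return table;
    }

    // Encodes a program into a byte buffer using the given table.
    // Push carries a 32-bit little-endian operand; other operations carry none.
    public static byte[] Encode(
        IEnumerable<(ToyOp Op, int Operand)> program,
        Dictionary<ToyOp, byte> table)
    {
        var buffer = new List<byte>();
        foreach (var (op, operand) in program)
        {
            buffer.Add(table[op]);
            if (op == ToyOp.Push)
            {
                buffer.Add((byte)(operand & 0xFF));
                buffer.Add((byte)((operand >> 8) & 0xFF));
                buffer.Add((byte)((operand >> 16) & 0xFF));
                buffer.Add((byte)((operand >> 24) & 0xFF));
            }
        }
        return buffer.ToArray();
    }
}
```

Encoding the same program with tables built from two different seeds will, in general, produce two different byte buffers, which is the effect described above: knowledge of one table says nothing about the other.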

The VM itself is stack-based, matching the evaluation-stack model that ECMA-335 defines for CIL. This is an important design choice: it allows the VM to faithfully replicate the semantics of every CIL instruction, including complex operations involving the evaluation stack, local variables, and exception handling, without translating them to a fundamentally different execution model.

How virtualization works: runtime

At runtime, when the protected method is called, execution enters the VM stub. The VM reads the encoded instruction stream from the byte buffer, decodes each instruction according to the method-specific opcode table, and executes it using its own stack and local variable storage.
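The read-decode-execute cycle can be sketched as a very small stack interpreter. This is an illustration under stated assumptions, not ArmDot's VM: the ToyVm class, its four operations, and the dictionary used as the method-specific opcode table are hypothetical, and exception handling, locals, and calls are left out entirely.

```csharp
using System;
using System.Collections.Generic;

public static class ToyVm
{
    // Logical operations the interpreter understands (hypothetical subset).
    public enum Op { Push, Add, Mul, Ret }

    // Runs an encoded buffer. 'decode' maps the byte values used by this
    // particular method back to logical operations.
    public static int Run(byte[] code, IReadOnlyDictionary<byte, Op> decode)
    {
        var stack = new Stack<int>(); // the VM's own evaluation stack
        int ip = 0;                   // instruction pointer into the buffer

        while (true)
        {
            Op op = decode[code[ip++]];
            switch (op)
            {
                case Op.Push:
                    // 32-bit little-endian operand follows the opcode byte.
                    int value = code[ip]
                        | (code[ip + 1] << 8)
                        | (code[ip + 2] << 16)
                        | (code[ip + 3] << 24);
                    ip += 4;
                    stack.Push(value);
                    break;
                case Op.Add:
                    stack.Push(stack.Pop() + stack.Pop());
                    break;
                case Op.Mul:
                    stack.Push(stack.Pop() * stack.Pop());
                    break;
                case Op.Ret:
                    return stack.Pop();
                default:
                    throw new InvalidOperationException("Unknown operation: " + op);
            }
        }
    }
}
```

With a table that maps 0x7A to Push, 0x13 to Add, 0xC4 to Mul and 0x05 to Ret, a buffer encoding "push 2, push 3, add, push 4, mul, ret" evaluates (2 + 3) * 4. The same bytes read through a different table would decode to a different program or fail outright, which is why the buffer alone tells an observer very little.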

From the outside, the method behaves identically to the original. Inputs produce the same outputs. Exceptions propagate normally. The .NET runtime does not know or care that the method body is being interpreted rather than JIT-compiled - the VM produces valid .NET operations throughout.

What the .NET runtime never sees is the original CIL. The JIT compiler compiles the stub and the VM interpreter, but never the original method body. The original logic exists only as encoded bytes in a buffer, interpreted by a VM whose design is not publicly documented.

What a decompiler actually sees

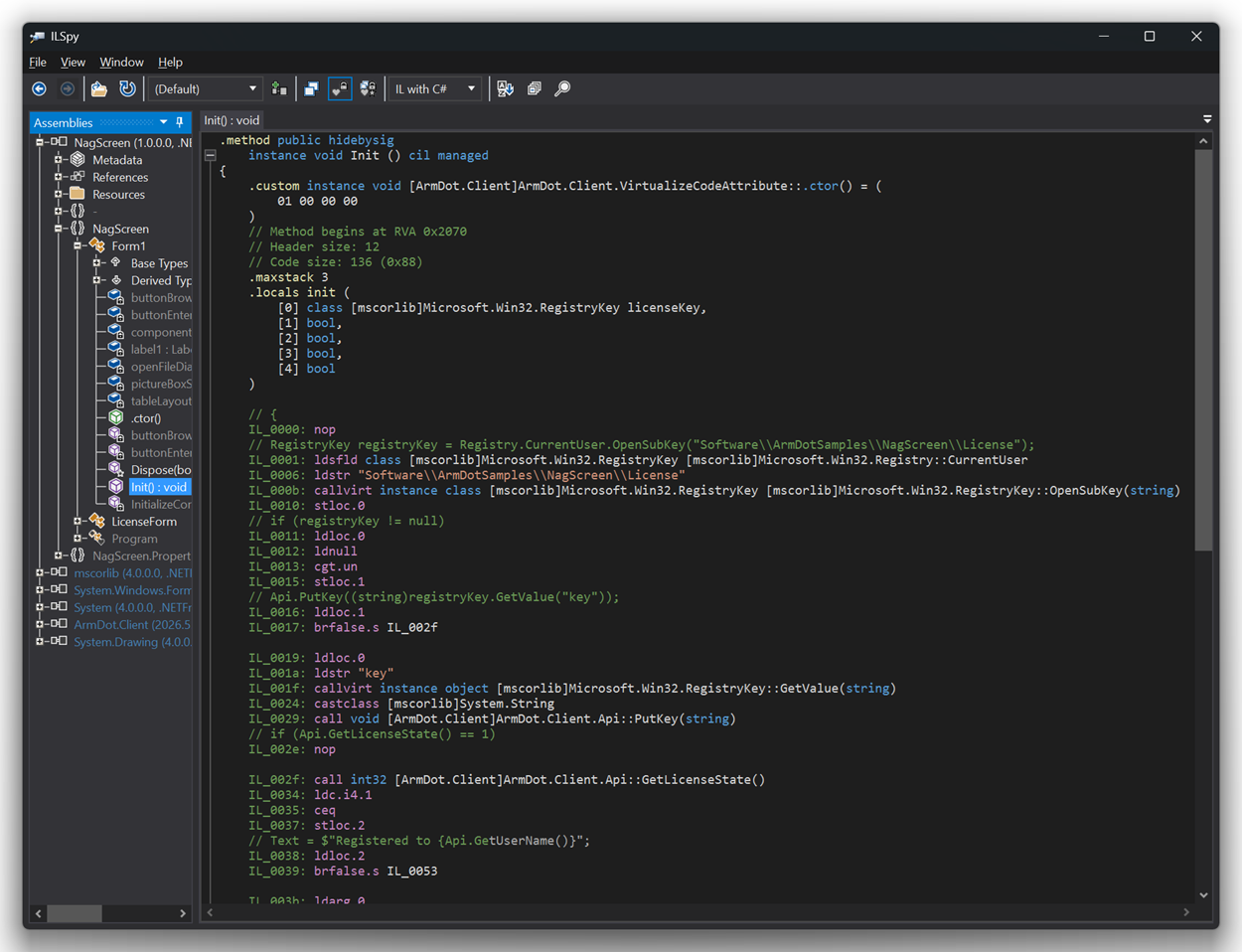

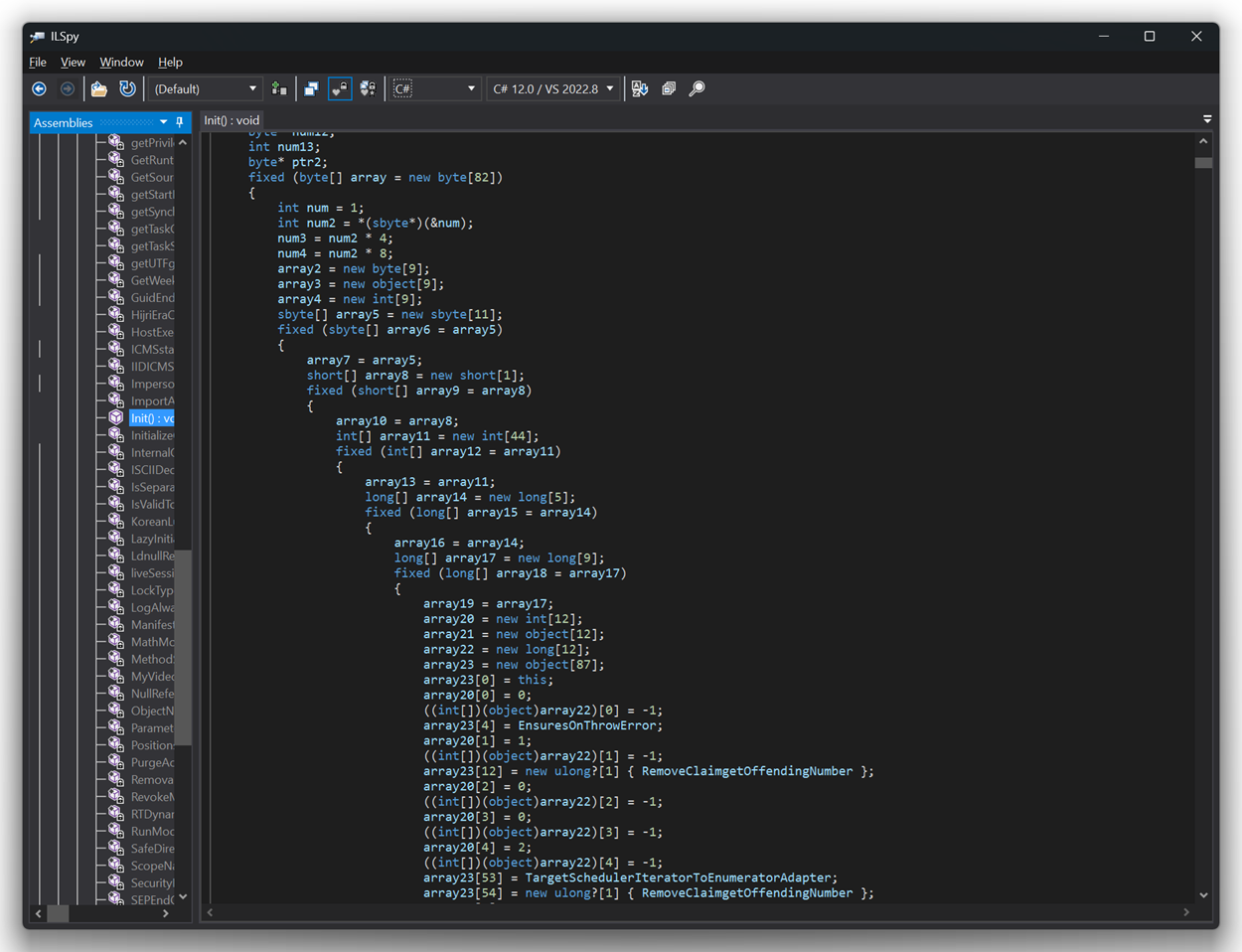

Open an unvirtualized .NET assembly in ILSpy and you see reconstructed C# that closely resembles the original source. Open a virtualized method and you see the VM interpreter loop - a small state machine that reads bytes, dispatches on encoded opcodes, and updates a stack pointer. The method's actual logic is invisible.

This is qualitatively different from control flow obfuscation, where the logic is present but scrambled. With virtualization, the logic is simply absent from anything a decompiler can reach.

Why static analysis fails

Static analysis tools - decompilers, disassemblers, automated reverse engineering frameworks - work by pattern-matching known instruction sequences. They know what CIL opcodes look like, what patterns the C# compiler produces for common constructs, and how to reconstruct control flow from branch instructions.

None of that knowledge applies to virtualized code. The custom opcodes have no public specification. Their meaning differs between methods and between builds. A static analyzer looking at the byte buffer sees an opaque blob with no known structure to match against.

The only thing static analysis can recover is the VM interpreter itself - the small stub that reads and dispatches the bytecode. An analyst can study the interpreter and understand its general structure. But understanding the interpreter does not reveal what the bytecode means without also reverse-engineering the per-method opcode table, which is embedded in the buffer in encoded form.

For most attackers, this barrier is sufficient. For a highly motivated analyst with deep .NET expertise and unlimited time, it raises the cost of analysis from hours to weeks or months - which is the realistic goal of any protection strategy.

Why dynamic analysis is much harder

When static analysis fails, attackers fall back on dynamic analysis: running the application under a debugger, setting breakpoints, and observing what the code does at runtime. Standard CIL methods are straightforward to debug - set a breakpoint at any instruction, inspect the stack and locals, step through execution.

Virtualized methods change this significantly. The execution path goes through the VM interpreter, not through standard CIL instructions. A debugger can observe that the VM is executing, can see the byte buffer being read, and can observe what values are placed on the VM's stack - but it cannot easily correlate these observations with the original method logic without first reconstructing the per-method opcode mapping.

An analyst can in principle instrument the VM interpreter itself to log every operation, building a trace of the method's behavior. This is possible but requires significant effort - and because the opcode mapping differs per method and per build, the analysis does not transfer. Each build and each method requires starting over.

ArmDot does not include a dedicated anti-debug feature - resistance to dynamic analysis is a property of the virtualization itself rather than of explicit debugger detection. A sufficiently patient analyst can still extract the logic of a virtualized method. What virtualization provides is a dramatically higher cost floor, not an absolute barrier.

Performance: the real numbers

Virtualization has a real performance cost. The VM interpreter adds overhead to every instruction - instead of JIT-compiled native code, you get interpreted bytecode with dispatch overhead on each operation.

The exact overhead depends entirely on what the method does. For a method that performs intensive numerical computation - many arithmetic operations in a tight loop - the overhead compounds dramatically. In a benchmark using SHA-256 hashing (1,000 iterations), virtualization produced a 6,779% slowdown compared to unobfuscated code. That is the worst case: a compute-heavy method where every instruction matters.

For methods that are not compute-intensive - license validation, configuration parsing, UI initialization, serial key checking, startup routines - the overhead is typically imperceptible to the end user. A license check that runs once at startup and takes 2ms unobfuscated might take 100ms virtualized. The user does not notice. The attacker faces a qualitatively different reverse engineering problem.
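To put the percentages into absolute terms, the arithmetic can be written out. The sketch below assumes that "X% slowdown" means the protected run takes X% longer than the baseline; the Slowdown class and its method names are hypothetical helpers, not part of ArmDot.

```csharp
using System;
using System.Diagnostics;

public static class Slowdown
{
    // Assumption: an X% slowdown means runtime = baseline * (1 + X / 100).
    public static double Multiplier(double slowdownPercent)
    {
        return 1.0 + slowdownPercent / 100.0;
    }

    // Projects the protected runtime from a measured baseline.
    public static double ProjectedMs(double baselineMs, double slowdownPercent)
    {
        return baselineMs * Multiplier(slowdownPercent);
    }

    // Measures the wall-clock time of 'iterations' calls, in milliseconds.
    // Run it against both the unprotected and the protected build to get
    // your own figure instead of relying on a projection.
    public static double MeasureMs(Action action, int iterations)
    {
        var stopwatch = Stopwatch.StartNew();
        for (int i = 0; i < iterations; i++)
        {
            action();
        }
        stopwatch.Stop();
        return stopwatch.Elapsed.TotalMilliseconds;
    }
}
```

Under that assumption, the 6,779% SHA-256 figure corresponds to a multiplier of about 68.8. Applied to a 2 ms license check it projects roughly 138 ms, the same order of magnitude as the 100 ms mentioned above and still a one-off cost at startup.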

For a detailed breakdown of how different obfuscation techniques compare on performance, including the benchmark code and GitHub project, see Does obfuscation affect performance?

The practical conclusion is always the same: virtualize selectively. Protect the methods where your IP and security logic live. Leave the hot paths - rendering loops, data processing pipelines, cryptographic primitives called millions of times - under lighter techniques or no obfuscation.

How to apply code virtualization in ArmDot

ArmDot uses the [VirtualizeCode] attribute from the ArmDot.Client NuGet package. The attribute can be applied at method, class, or assembly level, and inherits downward by default.

Virtualizing a single method:

    using ArmDot.Client;

    class LicenseManager
    {
        [VirtualizeCode]
        public bool ValidateSerialKey(string key)
        {
            // This method body will be virtualized
            // ...
        }
    }

Virtualizing an entire class:

    using ArmDot.Client;

    [VirtualizeCode]
    class LicenseManager
    {
        // All methods in this class will be virtualized
        public bool ValidateSerialKey(string key) { ... }
        public bool IsTrialExpired() { ... }
        public string GetHardwareId() { ... }
    }

Virtualizing an entire assembly and excluding specific methods:

    // In AssemblyInfo.cs or any source file
    using ArmDot.Client;

    [assembly: VirtualizeCode]

    // In a performance-critical class:
    class ImageProcessor
    {
        [VirtualizeCode(Enable = false)]
        public void ProcessFrame(byte[] pixels)
        {
            // Excluded from virtualization - too compute-intensive
        }
    }

The Enable and Inherit properties control the inheritance behavior. Setting Enable = false on a method excludes it from virtualization even when a parent class or assembly-level attribute would otherwise include it. Setting Inherit = false stops the attribute from propagating to child items.

ArmDot removes all [VirtualizeCode] attributes from the output assembly after processing - the protected binary carries no attribute metadata recording which methods were selected for virtualization.

Current limitations

Certain method types cannot currently be virtualized by ArmDot. When ArmDot encounters such a method with [VirtualizeCode] applied, it emits an ARMDOT0005 warning and skips virtualization for that method - it does not fail the build.

Open generic methods cannot be virtualized. A generic method whose type parameters are not fully resolved at the call site (for example, void Process<T>(T item) where T varies at runtime) presents challenges for the VM's type handling that are not yet implemented. Non-generic methods and closed generic instantiations are not affected.

Methods with ref-struct parameters or locals cannot be virtualized. ref struct types (like Span<T> and ReadOnlySpan<T>) have restrictions on where they can live - they cannot be placed on the heap or in boxed form - which conflicts with the VM's stack model. Support for this case is planned.
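The conflict is easy to reproduce outside any obfuscator. The sketch below assumes a VM stack that stores its slots as object, which is an assumption for illustration rather than a description of ArmDot's internals; RefStructDemo is a hypothetical name.

```csharp
using System;
using System.Collections.Generic;

public static class RefStructDemo
{
    public static int SumViaObjectStack()
    {
        // A general-purpose stack that holds any value by boxing it.
        var vmStack = new Stack<object>();
        vmStack.Push(40); // an int boxes without trouble
        vmStack.Push(2);

        // Span<int> is a ref struct: it may only live on the real call stack.
        Span<int> scratch = stackalloc int[2];
        scratch[0] = (int)vmStack.Pop(); // 2
        scratch[1] = (int)vmStack.Pop(); // 40

        // The next line does not compile, because a ref struct cannot be
        // converted to object (boxed) and so cannot be stored in the stack:
        // vmStack.Push(scratch);

        return scratch[0] + scratch[1];
    }
}
```

Ordinary values pass through the boxed stack freely, while the Span<int> has to stay in a real local variable. An interpreter that wants to support such methods needs a separate storage strategy for these values.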

Both limitations are technical rather than fundamental. Virtualization of these method types is possible in principle and is on the development roadmap.

When to use virtualization vs other techniques

The decision comes down to two questions: how valuable is the logic in this method, and how often does it run?

High value, low frequency - license checks, serial key validation, trial enforcement, activation logic, algorithms with significant commercial value that run occasionally. This is the ideal target for virtualization. Apply [VirtualizeCode] directly to these methods. The performance cost is invisible; the protection is as strong as .NET allows.

High value, high frequency - proprietary algorithms that run in tight loops or on every request. These require a judgment call. If the business impact of the IP being stolen outweighs the performance cost, virtualize and accept the overhead. If performance cannot be compromised, use control flow obfuscation instead - it provides meaningful protection at 2-5% overhead, far less than virtualization.

Low value, any frequency - utility methods, UI helpers, boilerplate. Renaming and string encryption are sufficient and cost nothing meaningful at runtime.

The most common mistake is applying [assembly: VirtualizeCode] to the entire assembly without exclusions. This works correctly - ArmDot handles it - but it virtualizes everything including compute-intensive code paths that do not need the strongest protection. The result is unnecessarily slow software. The smarter pattern is [assembly: VirtualizeCode] with targeted [VirtualizeCode(Enable = false)] exclusions on hot paths, or vice versa: no assembly-level attribute, with [VirtualizeCode] applied explicitly only to the methods that need it.

Related: Unity C# Obfuscation → - how IL2CPP's native compilation compares to what code virtualization provides.

Related: Can Obfuscated .NET Code Be Reversed? → - code virtualization is the technique that sits specifically outside de4dot's effective range.

Protect your highest-value .NET code with ArmDot

ArmDot implements code virtualization with a unique VM encoding per method and per build, running on Windows, Linux, and macOS. The [VirtualizeCode] attribute integrates directly into your build via NuGet - no separate tool invocation required. A free trial is available; assemblies protected with the trial version stop working after two weeks.